Check the demo at: https://huggingface.co/spaces/MatheusCavini/Heptapod-B_Generator_Text2Logogram

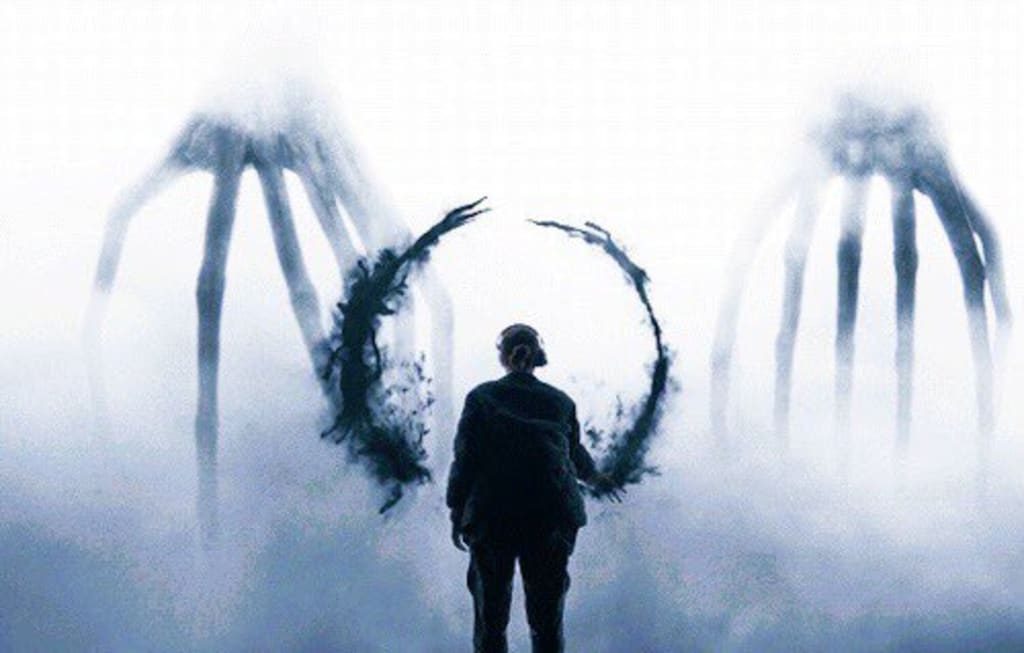

For those unfamiliar with the subject of this notebook, Heptapod-B is the written language used by the aliens known as Heptapods in the movie Arrival (2016).

The language is described as non-linear, time-independent and semasiographic, meaning that the logograms represent concepts directly and have no connection to the phonemes of the spoken form.

If you want to know more about how the language is structured and what its inconsistencies are (it is a fictional work, not a real language), I strongly recommend watching this video from 'Language & Film'. It gives some insight into the ideas used here for data augmentation and for evaluating results, as well as into the limitations of this project (again, keep in mind that this is a fictional language, so results can only go as far as its creators went).

In the movie, the linguist Louise is tasked with deciphering an alien language in order to establish communication with the Heptapods. Her approach involves presenting concepts — such as objects, actions, abstract ideas, and full sentences — and observing the corresponding logograms produced by the aliens. Over time, she builds a partial lexicon and begins to understand the structure of their written language, identifying recurring patterns and possible morphemes. This handcrafted process enables her not only to interpret, but also to construct new logograms.

While effective in the film, this method is fundamentally manual and relies heavily on the linguist's own insight to uncover the language’s structure and generative principles.

But what if we could automate that process?

In the era of large language models, GPTs, and powerful image generation tools, it's natural to wonder: Could a model be trained to learn the Heptapod-B language — not by decoding it manually — but by learning directly from pairs of English sentences and their corresponding logograms, as Louise might have collected?

This project explores that idea: building a multimodal system capable of learning the latent structure of Heptapod-B and generating novel logograms directly from English sentences composed of the vocabulary seen in training.

In short: let’s teach a machine to think like Louise.

Since the language is fictional, the dataset of Heptapod-English correspondences is fairly small and restricted to sentences and vocabulary used in the movie or developed in early experimental stages. This is a problem, since training language models usually requires lots of data, but I tried to do the best with what I had.

The dataset I used for training my model is the one available at this GitHub repo, which consists of 38 images at 3300x3300 resolution, each named after the meaning of its logogram. This "dictionary" was created by people from Wolfram who worked on software developed for the movie, so it can be considered (to a certain extent) an official source of information.

Now, 38 logogram-sentence pairs is unfortunately a very small amount of data. To improve on that a little, I applied two manual data augmentation techniques:

- Merging morphemes with known meanings to create new meaningful sentences. For example: joining "Heptapod" and "Walk" to create "Heptapod walk"

- Isolating morphemes from sentences to create a logogram for an isolated concept. For example: removing the morpheme for "Abbott" from "Abbott is dead" to create a logogram for "is (to be) dead"

I believe it is important to make a disclaimer at this point: these operations are basically what we expect the final model to perform, namely creating new logograms using patterns from the learned ones. So is it counterintuitive to do them manually for training? Perhaps. However, as I said before, I needed more training data, so I made a small number of these by hand in order to train a model that can (hopefully) do this by itself later.

Another data augmentation step was to apply image transformations to the logograms to introduce some variation. For each image I created 100 variations (the original + 99), using the following transformations:

- Making the image binary (black and white)

- Resizing the image to 224x224 (ResNet standard, even though I am not using it)

- Applying a random rotation of up to 10°

- Applying a random translation of up to 5% in each dimension

- Applying a random scaling of up to $\pm$5%
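The random rotation, translation and scaling listed above amount to drawing one set of affine parameters per variation. The sketch below is only an illustration of that idea, not the script actually used to build the dataset: the helper name `sample_affine_params`, the uniform sampling and the fixed seed are all assumptions.

```python
import random

def sample_affine_params(rng, max_deg=10.0, max_shift=0.05, max_scale=0.05):
    """Draw one random rotation / translation / scaling (hypothetical helper)."""
    return {
        "angle_deg": rng.uniform(-max_deg, max_deg),         # rotation of up to 10 degrees
        "shift_x": rng.uniform(-max_shift, max_shift),       # fraction of the image width
        "shift_y": rng.uniform(-max_shift, max_shift),       # fraction of the image height
        "scale": rng.uniform(1 - max_scale, 1 + max_scale),  # scaling of up to +/-5%
    }

rng = random.Random(0)  # fixed seed (an assumption) so the augmentation is reproducible
identity = {"angle_deg": 0.0, "shift_x": 0.0, "shift_y": 0.0, "scale": 1.0}

# Original image plus 99 random variations = 100 images per logogram
variations = [identity] + [sample_affine_params(rng) for _ in range(99)]
print(len(variations))  # 100
```

With torchvision, parameters in these ranges roughly correspond to `transforms.RandomAffine(degrees=10, translate=(0.05, 0.05), scale=(0.95, 1.05))`.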

After all this, I ended up with a dataset of 49 logogram-sentence correspondences, each logogram having 100 image variations at 224x224, for a total of 4900 images to train the model on. This dataset is attached to this Kaggle workspace. The captions.txt file holds the caption for each distinct logogram; matching captions to all the image variations is done later in code:

000.png | Abbot is dead

001.png | Abbot

002.png | Abbot chooses save humanity

003.png | Abbot chooses save Ian&Louise

004.png | Abbot solve

005.png | Before and After
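As a small worked example of how one caption line fans out to its 100 image variations, the sketch below assumes the numbering used by the loading code in this notebook (image number = logogram index × 100 + variation index). The function name `expand_caption_line` is hypothetical.

```python
def expand_caption_line(line, n_variations=100):
    """Map one captions.txt line to (caption, image file) pairs."""
    name, caption = line.strip().split("|", 1)  # split on the first "|" only
    idx = int(name.strip().split(".")[0])       # "003.png" -> 3
    caption = caption.strip()
    return [
        (caption, f"{idx * n_variations + i:04d}.png")
        for i in range(n_variations)
    ]

pairs = expand_caption_line("003.png | Abbot chooses save Ian&Louise")
print(pairs[0])   # ('Abbot chooses save Ian&Louise', '0300.png')
print(pairs[-1])  # ('Abbot chooses save Ian&Louise', '0399.png')
```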

The dataset can then be loaded using the following code:

```python
import os

import torch
from PIL import Image
from torch.utils.data import Dataset, DataLoader
from torchvision import transforms


class TextToImageDataset(Dataset):
    def __init__(self, image_dir, captions_file, transform=None):
        self.image_dir = image_dir  # Path for the images on the dataset
        self.transform = transform
        self.pairs = []  # List of (caption, image file) pairs

        with open(captions_file, "r") as f:
            for line in f:
                idx, caption = line.strip().split("|")
                idx = idx.strip().split(".")[0]
                caption = caption.strip()
                for i in range(100):
                    # Get the image number by doing idx*100 + i
                    img_file = f"{(int(idx) * 100 + i):04d}.png"
                    # Apply the same caption to every variation of the same logogram
                    self.pairs.append((caption, img_file))

    def __len__(self):
        return len(self.pairs)

    def __getitem__(self, idx):
        text, img_file = self.pairs[idx]
        image = Image.open(os.path.join(self.image_dir, img_file)).convert("RGB")
        if self.transform:
            image = self.transform(image)
        return text, image  # item = (text, image)


# Make sure that images are resized to 224x224 and converted to tensors
transform = transforms.Compose([
    transforms.Resize((224, 224)),
    transforms.ToTensor()
])

base_dir = "/kaggle/input/heptapod-dataset/dataset/"
dataset = TextToImageDataset(
    image_dir=base_dir + "images",
    captions_file=base_dir + "captions.txt",
    transform=transform,
)
```

We can check an example pair loaded from the dataset:

```python
import matplotlib.pyplot as plt
from random import randint


def show_tensor_image(t, text=""):
    t = t.squeeze().detach().cpu()
    if t.dim() == 2:
        # Single-channel image: squeeze() has already dropped the channel axis
        plt.imshow(t, cmap="gray")
    else:
        plt.imshow(t.permute(1, 2, 0))  # [C, H, W] → [H, W, C]
    if text != "":
        plt.title(text)
    plt.axis("off")


# randint is inclusive on both ends, so the last valid index is len(dataset) - 1
ex_caption, ex_img = dataset[randint(0, len(dataset) - 1)]
show_tensor_image(ex_img, ex_caption)
```

As previously explained, the logograms are composed of morphemes, each with a particular geometry, stroke and other visual characteristics.

The first goal for a model that generates logograms is therefore to understand these geometric features. For that, I trained a Variational Autoencoder (VAE), which compresses each image into a lower-dimensional latent representation and reconstructs the image from it.

If that is successfully achieved, we have a model that understands the overall structure of the logograms and their morphemes: it learns how to encode their visual features into the lower-dimensional representation and how to use that representation to reconstruct the image.

The architecture for the VAE is defined below:

```python
import torch.nn as nn
import torch.nn.functional as F


class ConvVAE(nn.Module):
    def __init__(self, latent_dim=128):
        super().__init__()
        self.latent_dim = latent_dim

        # Encoder: Conv layers → flatten → μ, logσ²
        self.encoder = nn.Sequential(
            nn.Conv2d(3, 32, 4, stride=2, padding=1),     # 112x112
            nn.ReLU(),
            nn.Conv2d(32, 64, 4, stride=2, padding=1),    # 56x56
            nn.ReLU(),
            nn.Conv2d(64, 128, 4, stride=2, padding=1),   # 28x28
            nn.ReLU(),
            nn.Conv2d(128, 256, 4, stride=2, padding=1),  # 14x14
            nn.ReLU(),
            nn.Flatten()
        )
        self.fc_mu = nn.Linear(256 * 14 * 14, latent_dim)
        self.fc_logvar = nn.Linear(256 * 14 * 14, latent_dim)

        # Decoder: z → fully connected → ConvTranspose
        self.fc_decode = nn.Linear(latent_dim, 256 * 14 * 14)
        self.decoder = nn.Sequential(
            nn.Unflatten(1, (256, 14, 14)),
            nn.ConvTranspose2d(256, 128, 4, stride=2, padding=1),  # 28x28
            nn.ReLU(),
            nn.ConvTranspose2d(128, 64, 4, stride=2, padding=1),   # 56x56
            nn.ReLU(),
            nn.ConvTranspose2d(64, 32, 4, stride=2, padding=1),    # 112x112
            nn.ReLU(),
            nn.ConvTranspose2d(32, 3, 4, stride=2, padding=1),     # 224x224
            nn.Sigmoid()  # To keep output in [0,1]
        )

    def reparameterize(self, mu, logvar):
        std = torch.exp(0.5 * logvar)
        eps = torch.randn_like(std)
        return mu + eps * std

    def forward(self, x):
        encoded = self.encoder(x)
        mu = self.fc_mu(encoded)
        logvar = self.fc_logvar(encoded)
        z = self.reparameterize(mu, logvar)
        decoded = self.decoder(self.fc_decode(z))
        return decoded, mu, logvar
```

```python
def vae_loss(recon_x, x, mu, logvar):
    beta = 1.0
    recon_loss = F.binary_cross_entropy(recon_x, x, reduction='sum')
    kl_div = -0.5 * torch.sum(1 + logvar - mu.pow(2) - logvar.exp())
    return recon_loss + beta * kl_div
```

The model is trained to minimize the reconstruction loss between the input and the output image, together with the KL regularization term on the latent distribution. After some experimentation, a latent space of 256 dimensions was found to offer a good balance between representation capacity and reconstruction fidelity. This dimensionality seems to be sufficient to capture meaningful structure in the logograms without leading to overfitting or unnecessary complexity.
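For reference, the objective computed by `vae_loss` can be written out explicitly. This is the usual closed-form VAE objective for a diagonal Gaussian posterior and a standard normal prior, transcribed from the code; the symbols $\hat{x}$ (reconstruction), $d$ (latent dimension) and $\epsilon$ (noise sample) are notation introduced here.

$$ \mathcal{L}(x) = -\sum_{i}\Big[x_i \log \hat{x}_i + (1 - x_i)\log(1 - \hat{x}_i)\Big] \;+\; \beta \cdot \Big(-\frac{1}{2}\sum_{j=1}^{d}\big(1 + \log\sigma_j^2 - \mu_j^2 - \sigma_j^2\big)\Big) $$

The first term is the binary cross-entropy summed over all pixels and channels, and the second is the KL divergence between $\mathcal{N}(\mu, \sigma^2)$ and $\mathcal{N}(0, I)$. During training the latent vector is sampled with the reparameterization trick:

$$ z = \mu + \sigma \odot \epsilon, \quad \sigma = \exp\big(\tfrac{1}{2}\log\sigma^2\big), \quad \epsilon \sim \mathcal{N}(0, I) $$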

```python
from torch import optim
from tqdm import tqdm

# Load the dataset
loader = DataLoader(dataset, batch_size=100, shuffle=True)

# Training loop
device = "cuda" if torch.cuda.is_available() else "cpu"
vae = ConvVAE(latent_dim=256).to(device)  # Train for a 256-dimensional latent representation
optimizer = optim.Adam(vae.parameters(), lr=1e-3)

for epoch in range(60):
    vae.train()
    total_loss = 0
    for _, img in tqdm(loader):  # Gets only the image from the dataset
        img = img.to(device)
        recon, mu, logvar = vae(img)
        loss = vae_loss(recon, img, mu, logvar)

        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
        total_loss += loss.item()

    # Average loss per image over the epoch
    print(f"Epoch {epoch+1}, Loss: {total_loss / len(loader.dataset):.4f}")
```

```python
torch.save(vae.state_dict(), "vae_trained_beta4_d1024.pth")
```

Let's test how the VAE reconstructs an example image, to see whether it has learned a meaningful latent representation:

```python
vae.eval()

# randint is inclusive; image files are numbered 0000.png to 4899.png
idx = randint(0, 4899)
img = Image.open(base_dir + f"images/{idx:04d}.png").convert("RGB")
img = transform(img).unsqueeze(0).to(device)

with torch.no_grad():
    recon, mu, _ = vae(img)

plt.figure(figsize=(8, 4))
plt.subplot(1, 2, 1)
plt.title("Input")
show_tensor_image(img)
plt.subplot(1, 2, 2)
plt.title("Reconstruction")
show_tensor_image(recon)
plt.show()
```

If you want to skip the VAE training process, run the cells below to load the parameters I trained previously.

```python
loader = DataLoader(dataset, batch_size=100, shuffle=True)
device = "cuda" if torch.cuda.is_available() else "cpu"
print("Using Device: " + str(device))

vae = ConvVAE(latent_dim=256)
vae.load_state_dict(torch.load("/kaggle/input/trained-vae/pytorch/default/1/vae_trained_2.pth", map_location=device))
vae.eval().to(device)
```

```
Using Device: cuda
ConvVAE(
  (encoder): Sequential(
    (0): Conv2d(3, 32, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1))
    (1): ReLU()
    (2): Conv2d(32, 64, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1))
    (3): ReLU()
    (4): Conv2d(64, 128, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1))
    (5): ReLU()
    (6): Conv2d(128, 256, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1))
    (7): ReLU()
    (8): Flatten(start_dim=1, end_dim=-1)
  )
  (fc_mu): Linear(in_features=50176, out_features=256, bias=True)
  (fc_logvar): Linear(in_features=50176, out_features=256, bias=True)
  (fc_decode): Linear(in_features=256, out_features=50176, bias=True)
  (decoder): Sequential(
    (0): Unflatten(dim=1, unflattened_size=(256, 14, 14))
    (1): ConvTranspose2d(256, 128, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1))
    (2): ReLU()
    (3): ConvTranspose2d(128, 64, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1))
    (4): ReLU()
    (5): ConvTranspose2d(64, 32, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1))
    (6): ReLU()
    (7): ConvTranspose2d(32, 3, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1))
    (8): Sigmoid()
  )
)
```
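The `in_features=50176` in the printed model, and the spatial sizes noted in the architecture comments, follow from the usual convolution size arithmetic. The short check below is a sketch using the standard output-size formulas for `nn.Conv2d` and `nn.ConvTranspose2d` (dilation 1, no output padding); the helper names `conv_out` and `deconv_out` are made up for this example.

```python
def conv_out(n, kernel=4, stride=2, padding=1):
    """Output size of a Conv2d layer along one spatial dimension."""
    return (n + 2 * padding - kernel) // stride + 1

def deconv_out(n, kernel=4, stride=2, padding=1):
    """Output size of a ConvTranspose2d layer along one spatial dimension."""
    return (n - 1) * stride - 2 * padding + kernel

down = [224]
for _ in range(4):               # four stride-2 encoder layers
    down.append(conv_out(down[-1]))
print(down)                      # [224, 112, 56, 28, 14]
print(256 * down[-1] ** 2)       # 50176 = 256 channels * 14 * 14

up = [14]
for _ in range(4):               # four stride-2 decoder layers
    up.append(deconv_out(up[-1]))
print(up)                        # [14, 28, 56, 112, 224]
```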

By training the VAE, we have a model capable of generating a logogram from its latent representation.

Therefore, we now need a model that converts the text associated with the logogram into the corresponding latent representation.

The first step is to create a tokenizer for the captions, so that the words in the training vocabulary are converted into tokens that can be used as inputs for the model. The proposed tokenizer simply learns the vocabulary present in the training captions and maps each word to an index, which is used as its token.

```python
class Vocab:
    def __init__(self):
        self.word2idx = {"<PAD>": 0, "<UNK>": 1}
        self.idx2word = ["<PAD>", "<UNK>"]

    def build(self, sentences):
        for sent in sentences:
            for word in sent.lower().split():
                if word not in self.word2idx:
                    self.word2idx[word] = len(self.idx2word)
                    self.idx2word.append(word)

    def encode(self, sentence):
        return [self.word2idx.get(w, 1) for w in sentence.lower().split()]

    def __len__(self):
        return len(self.idx2word)
```

```python
vocab = Vocab()
# dataset.pairs holds (caption, image file) tuples; using it avoids loading every image
vocab.build([caption for caption, _ in dataset.pairs])
print("The vocabulary learned from the data has " + str(len(vocab)) + " elements.")
```

```
The vocabulary learned from the data has 51 elements.
```
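To make the tokenizer's behavior concrete, here is a condensed, self-contained version of the same idea run on three captions from the dataset. It is only an illustration (the real vocabulary is built from all captions, so the actual indices differ), but it shows two consequences of lower-casing and splitting on whitespace: matching is case-insensitive, and a token such as "Ian&Louise" is a single word, so "Ian" and "Louise" on their own fall back to `<UNK>`.

```python
captions = ["Abbot is dead", "Abbot chooses save Ian&Louise", "Before and After"]

word2idx = {"<PAD>": 0, "<UNK>": 1}
for sentence in captions:
    for word in sentence.lower().split():   # lower-case, split on whitespace only
        word2idx.setdefault(word, len(word2idx))

def encode(sentence):
    # Unknown words map to index 1 (<UNK>)
    return [word2idx.get(w, 1) for w in sentence.lower().split()]

print(encode("abbot IS dead"))    # [2, 3, 4]
print(encode("Ian&Louise"))       # [7]
print(encode("Ian and Louise"))   # [1, 9, 1]
```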

Then, we need to define a model responsible for "digesting" the tokens of the captions sequentially and producing a final vector with the same dimension as the latent representation learned by the VAE. The architecture chosen for this is a Gated Recurrent Unit (GRU), a special type of Recurrent Neural Network (RNN).

The first layer of the model is an Embedding layer, which converts each token index into a dense embedding vector of dimension embed_dim. This is conceptually equivalent to one-hot encoding the index and multiplying it by a weight matrix, and that matrix is also learned in the training process.

The embeddings of each token are then fed sequentially to the GRU, which is trained to generate a latent representation.
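For readers who want the recurrence spelled out, the update applied at each token follows the standard GRU formulation, as documented for `torch.nn.GRU`. The update gate is written $u_t$ here (rather than the more common $z_t$) to avoid a clash with the latent vector $z$; $x_t$ is the embedding of the $t$-th token, $h_0 = 0$, and $T$ is the number of tokens.

$$
\begin{aligned}
r_t &= \sigma(W_{ir} x_t + b_{ir} + W_{hr} h_{t-1} + b_{hr}) \\
u_t &= \sigma(W_{iu} x_t + b_{iu} + W_{hu} h_{t-1} + b_{hu}) \\
n_t &= \tanh\big(W_{in} x_t + b_{in} + r_t \odot (W_{hn} h_{t-1} + b_{hn})\big) \\
h_t &= (1 - u_t) \odot n_t + u_t \odot h_{t-1}
\end{aligned}
$$

In this notebook's model, the final hidden state $h_T$ is passed through one linear layer to produce the predicted latent vector: $\hat{z} = W_{fc}\, h_T + b_{fc}$.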

```python
class GRU(nn.Module):
    def __init__(self, vocab_size, embed_dim, latent_dim):
        super().__init__()
        self.embed = nn.Embedding(vocab_size, embed_dim)
        self.rnn = nn.GRU(embed_dim, latent_dim, batch_first=True)
        self.fc = nn.Linear(latent_dim, latent_dim)

    def forward(self, token_ids):
        embedded = self.embed(token_ids)
        _, hidden = self.rnn(embedded)      # hidden: [1, batch, latent_dim]
        return self.fc(hidden.squeeze(0))   # [batch, latent_dim]
```

In the training loop for the GRU, the input is the tokenized sequence of each caption and the target is the latent representation of the corresponding logogram, computed by the encoder part of the VAE, since the goal is for the GRU to learn this representation.

```python
from torch.nn.utils.rnn import pad_sequence

# latent_dim of the output must be the same as the VAE's
gru = GRU(len(vocab), embed_dim=256, latent_dim=256).to(device)
optimizer = torch.optim.Adam(gru.parameters(), lr=1e-3)
loss_fn = nn.MSELoss()

# Training Loop
for epoch in range(30):
    gru.train()
    total_loss = 0
    for captions, images in loader:
        # Tokenize the captions
        token_tensors = [torch.tensor(vocab.encode(c), dtype=torch.long) for c in captions]
        token_batch = pad_sequence(token_tensors, batch_first=True, padding_value=0).to(device)
        images = images.to(device)

        with torch.no_grad():
            _, mu, logvar = vae(images)
            # Compute expected latent representation for the associated logogram
            z_target = mu

        z_pred = gru(token_batch)
        loss = loss_fn(z_pred, z_target)

        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
        total_loss += loss.item()

    print(f"Epoch {epoch+1}, Loss: {total_loss / len(loader):.4f}")
```

```python
torch.save(gru.state_dict(), "gru_trained.pth")
```

If you want to skip the GRU training process, run the cells below to load the parameters I trained previously.

```python
vocab = Vocab()
# dataset.pairs holds (caption, image file) tuples; using it avoids loading every image
vocab.build([caption for caption, _ in dataset.pairs])

gru = GRU(len(vocab), embed_dim=256, latent_dim=256).to(device)
gru.load_state_dict(torch.load("/kaggle/input/gru-trained/pytorch/default/1/gru_trained.pth", map_location=device))
gru.eval().to(device)
```

```
GRU(
  (embed): Embedding(51, 256)
  (rnn): GRU(256, 256, batch_first=True)
  (fc): Linear(in_features=256, out_features=256, bias=True)
)
```

Finally, if we chain the two trained models so that:

- A text is fed as input of the GRU and converted into a latent representation

- The latent representation is fed as input of the decoder part of the VAE and used to generate a logogram

We have a complete pipeline for a model that generates logograms from text!

```python
def generate_logogram(text, gru, vae, vocab, device="cpu"):
    gru.eval()
    vae.eval()
    with torch.no_grad():
        # Transform text into token sequence
        token_ids = torch.tensor([vocab.encode(text)], dtype=torch.long).to(device)
        # Compute latent representation using GRU model
        z = gru(token_ids)
        # Generate a new logogram using the VAE decoder
        img = vae.decoder(vae.fc_decode(z)).squeeze(0).cpu().permute(1, 2, 0).numpy()

    plt.imshow(img)
    plt.title(text)
    plt.axis("off")
    plt.show()
```

```python
text_to_generate = "Earth is dead"
generate_logogram(text_to_generate, gru=gru, vae=vae, vocab=vocab, device=device)
```

The combination of a

Interestingly, this behavior is not entirely undesirable. In fact, the soft, diffuse appearance resembles the way logograms are depicted in Arrival, emerging as fluid, ink-like structures rather than crisp, hand-drawn symbols. From a stylistic perspective, this can be seen as a feature rather than a flaw.

However, if the goal is to recover sharper, more discrete logograms, closer to the training data, a simple post-processing step can be applied: image binarization through thresholding.

By applying a threshold to the pixel intensities, we can force the output to take on only two values (black and white), effectively removing intermediate gray levels and sharpening edges.
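As a tiny worked example of the thresholding step, the sketch below binarizes a made-up 2x3 grayscale patch using plain Python lists. The pixel values are invented for illustration, and the helper name `binarize` is not part of the notebook.

```python
def binarize(gray, threshold=0.5):
    """Map every pixel to 1.0 if it is strictly above the threshold, else 0.0."""
    return [[1.0 if p > threshold else 0.0 for p in row] for row in gray]

# Made-up "soft" decoder output with intermediate gray levels
soft = [[0.05, 0.40, 0.55],
        [0.30, 0.50, 0.95]]

print(binarize(soft))                  # [[0.0, 0.0, 1.0], [0.0, 0.0, 1.0]]
print(binarize(soft, threshold=0.35))  # [[0.0, 1.0, 1.0], [0.0, 1.0, 1.0]]
```

Lowering the threshold turns more of the gray pixels white, so the threshold value controls how thick or thin the resulting strokes appear.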

```python
def generate_logogram_binary(text, gru, vae, vocab, device="cpu", threshold=0.5):
    gru.eval()
    vae.eval()
    with torch.no_grad():
        # Text -> tokens
        token_ids = torch.tensor(
            [vocab.encode(text)],
            dtype=torch.long
        ).to(device)
        # Text -> latent vector
        z = gru(token_ids)
        # Decode image
        img = vae.decoder(vae.fc_decode(z)).squeeze(0).cpu()
        # Convert RGB -> grayscale
        img_gray = img.mean(dim=0)
        # Threshold
        img_binary = (img_gray > threshold).float().numpy()

    plt.figure(figsize=(5, 5))
    plt.imshow(img_binary, cmap="gray")
    plt.title(text)
    plt.axis("off")
    plt.show()
```

```python
text_to_generate = "Earth is dead"
generate_logogram_binary(text_to_generate, gru=gru, vae=vae, vocab=vocab, device=device, threshold=0.5)
```

One of the most visually striking aspects of Heptapod-B in Arrival is the way logograms are not simply written, but materialize and morph fluidly, emerging from an inky fog and transitioning seamlessly as meaning unfolds. This dynamic, almost continuous transformation suggests a representation of language that is fundamentally different from discrete symbol sequences.

Interestingly, our model exhibits a related behavior due to the structure of its learned latent space.

After training the pipeline — where text is mapped to a latent vector and then decoded into a logogram — each sentence corresponds to a point in a continuous latent space. Because this space is learned through a VAE, it is structured and smooth, meaning that nearby points in the latent space tend to decode into visually similar logograms.

This property allows us to explore latent space interpolation.

Given two sentences, we can:

- Encode each sentence into its corresponding latent vector, $z_1$ and $z_2$

- Interpolate between them using a linear combination: $$ z_\alpha = (1-\alpha)\cdot z_1 + \alpha \cdot z_2, \quad \alpha \in [0,1]$$

- Decode each intermediate vector $z_\alpha$ into an image using the VAE decoder.
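The interpolation formula can be checked on toy vectors before applying it to real latent codes. The sketch below uses made-up 3-dimensional vectors in place of the 256-dimensional outputs of the GRU; the helper name `lerp` is an assumption for this example.

```python
def lerp(z1, z2, alpha):
    """Linear interpolation: (1 - alpha) * z1 + alpha * z2, element by element."""
    return [(1 - alpha) * a + alpha * b for a, b in zip(z1, z2)]

# Toy stand-ins for the latent vectors of two sentences
z1 = [0.0, 2.0, -1.0]
z2 = [4.0, 0.0, 1.0]

steps = 5
path = [lerp(z1, z2, i / (steps - 1)) for i in range(steps)]  # alpha = 0, 0.25, ..., 1
for z in path:
    print(z)
# [0.0, 2.0, -1.0]
# [1.0, 1.5, -0.5]
# [2.0, 1.0, 0.0]
# [3.0, 0.5, 0.5]
# [4.0, 0.0, 1.0]
```

The first and last points reproduce $z_1$ and $z_2$ exactly, and every intermediate point moves by the same amount, which is what makes the decoded frames change gradually.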

Because the latent space is continuous, the decoded images form a sequence of gradual visual transitions, where each generated logogram is a slight variation of the previous one. When these frames are combined into an animation, the result is a smooth morphing effect, with no abrupt discontinuities, transitioning from one logogram to another.

While this behavior emerges from the mathematical properties of the model rather than an explicit design choice, it provides a compelling parallel to the fluid, evolving nature of Heptapod-B as depicted in the film.

Below is the implementation of the interpolation described above, including the generation of a GIF.

```python
import torch
import matplotlib.pyplot as plt
import numpy as np
from matplotlib.animation import FuncAnimation, PillowWriter


def animate_logogram_interpolation(text1, text2, model, vae, vocab, device="cpu", steps=30, save_path="interpolation.gif"):
    model.eval()
    vae.eval()
    with torch.no_grad():
        # Encode both texts into latent vectors
        t1 = torch.tensor([vocab.encode(text1)], dtype=torch.long).to(device)
        t2 = torch.tensor([vocab.encode(text2)], dtype=torch.long).to(device)
        z1 = model(t1)
        z2 = model(t2)

        # Linear interpolation between 2 vectors, keeping first and last still for 10 frames
        interpolated_z = [z1] * 10
        interpolated_z += [
            z1 * (1 - alpha) + z2 * alpha
            for alpha in np.linspace(0, 1, steps)
        ]
        interpolated_z += [z2] * 10

        # Generate 1 image for each interpolated point
        images = [
            vae.decoder(vae.fc_decode(z)).squeeze(0).cpu().permute(1, 2, 0).numpy()
            for z in interpolated_z
        ]

    # Build Animation
    fig, ax = plt.subplots()
    im = ax.imshow(images[0])
    ax.axis("off")

    def update(frame):
        im.set_data(images[frame])
        return [im]

    anim = FuncAnimation(fig, update, frames=len(images), interval=100, blit=True)

    # Save as a GIF
    anim.save(save_path, writer=PillowWriter(fps=24))
    print(f"GIF saved as: {save_path}")
    plt.close()
```

```python
animate_logogram_interpolation(
    "Heptapod purpose Earth ?",
    "Offer Weapon",
    model=gru,
    vae=vae,
    vocab=vocab,
    device=device,  # or "cpu"
    steps=100,
    save_path="logogram_morphing.gif"
)
```

```
GIF saved as: logogram_morphing.gif
```

```python
from IPython.display import HTML

print("[CHECK THE OUTPUT FILES FOR LOGOGRAM_MORPHING.GIF IF IT DOES NOT RENDER HERE]")
HTML('<img src="logogram_morphing.gif">')
```

```
[CHECK THE OUTPUT FILES FOR LOGOGRAM_MORPHING.GIF IF IT DOES NOT RENDER HERE]
```

One of the main limitations of the initial model was its inability to handle out-of-vocabulary (OOV) words. Since the vocabulary was built exclusively from the training dataset — which is already very small — any unseen word at inference time would be mapped to a generic <UNK> token. This effectively collapses all unknown words into the same representation, making it impossible for the model to distinguish between them.

To address this, I explored the use of pre-trained word embeddings, specifically GloVe (Global Vectors for Word Representation). Unlike embeddings learned from scratch, GloVe vectors are trained on large text corpora and capture rich semantic relationships between words. This allows the model to leverage prior knowledge about language, even for words that never appeared in the training dataset.

Instead of learning embeddings solely from the limited dataset, we:

- Initialize the embedding layer with GloVe vectors

- Expand the vocabulary to include all words present in GloVe

- Allow the model to map semantically similar words to similar latent representations

This enables the model to generalize beyond the training data — at least in a semantic sense.
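To illustrate what "semantically similar words get similar vectors" means in practice, the sketch below parses a few lines written in the GloVe text layout (a word followed by its vector components) and compares them with cosine similarity. The three vectors are made up for the example and are not real GloVe values; real files such as `glove.6B.100d.txt` use 100 components per word.

```python
import math

# Toy lines in the GloVe text layout ("word v1 v2 ..."); the numbers are invented
toy_lines = [
    "human 0.9 0.1 0.3",
    "person 0.8 0.2 0.3",
    "weapon -0.2 0.9 -0.5",
]

embeddings = {}
for line in toy_lines:
    word, *values = line.split()
    embeddings[word] = [float(v) for v in values]

def cosine(u, v):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

sim_close = cosine(embeddings["human"], embeddings["person"])
sim_far = cosine(embeddings["human"], embeddings["weapon"])
print(sim_close > sim_far)  # True
```

Because the GRU receives these vectors as input, words whose embeddings are close in this sense tend to be mapped to nearby latent vectors.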

```python
!wget http://nlp.stanford.edu/data/glove.6B.zip
!unzip -q glove.6B.zip
```

```python
glove_path = "glove.6B.100d.txt"
glove_words = set()

with open(glove_path, encoding="utf-8") as f:
    for line in f:
        word = line.split()[0]
        glove_words.add(word)
```

```python
class Vocab:
    def __init__(self, glove_words):
        self.word2idx = {"<PAD>": 0, "<UNK>": 1}
        self.idx2word = ["<PAD>", "<UNK>"]
        # Iterate in sorted order: set iteration order can differ between Python
        # sessions, which would change the word -> index mapping and break
        # embedding weights saved in an earlier session
        for word in sorted(glove_words):
            self.word2idx[word] = len(self.idx2word)
            self.idx2word.append(word)

    def encode(self, sentence):
        return [
            self.word2idx.get(word, self.word2idx["<UNK>"])
            for word in sentence.lower().split()
        ]

    def __len__(self):
        return len(self.idx2word)


vocab = Vocab(glove_words)
```

```python
import torch


def load_glove_embeddings(filepath, vocab, embed_dim=100):
    embeddings_index = {}
    with open(filepath, encoding="utf-8") as f:
        for line in f:
            values = line.split()
            word = values[0]
            vector = torch.tensor([float(v) for v in values[1:]], dtype=torch.float)
            embeddings_index[word] = vector

    # Rows without a GloVe vector (such as <UNK>) keep a random initialization
    matrix = torch.randn(len(vocab), embed_dim)
    matrix[0] = torch.zeros(embed_dim)  # PAD = zero
    for word, idx in vocab.word2idx.items():
        if word in embeddings_index:
            matrix[idx] = embeddings_index[word]
    return matrix


glove_matrix = load_glove_embeddings(glove_path, vocab, embed_dim=100)
```

Now the embedding layer of the GRU model doesn't need to be trained from scratch. It can load the matrix we just built and fine-tune it during training.

```python
import torch.nn as nn


class GRU(nn.Module):
    def __init__(self, vocab_size, embed_dim, latent_dim, pretrained_embedding=None):
        super().__init__()
        if pretrained_embedding is not None:
            # freeze=False keeps the GloVe vectors trainable
            self.embed = nn.Embedding.from_pretrained(pretrained_embedding, freeze=False)
        else:
            self.embed = nn.Embedding(vocab_size, embed_dim)
        self.rnn = nn.GRU(embed_dim, latent_dim, batch_first=True)
        self.fc = nn.Linear(latent_dim, latent_dim)

    def forward(self, token_ids):
        embedded = self.embed(token_ids)
        _, hidden = self.rnn(embedded)
        return self.fc(hidden.squeeze(0))
```

```python
from torch.nn.utils.rnn import pad_sequence

gru_glove = GRU(vocab_size=len(vocab), embed_dim=100, latent_dim=256, pretrained_embedding=glove_matrix).to(device)
optimizer = torch.optim.Adam(gru_glove.parameters(), lr=1e-3)
loss_fn = nn.MSELoss()

# Training Loop
for epoch in range(60):
    gru_glove.train()
    total_loss = 0
    for captions, images in loader:
        # Tokenize the captions
        token_tensors = [torch.tensor(vocab.encode(c), dtype=torch.long) for c in captions]
        token_batch = pad_sequence(token_tensors, batch_first=True, padding_value=0).to(device)
        images = images.to(device)

        with torch.no_grad():
            _, mu, logvar = vae(images)
            # Compute expected latent representation for the associated logogram
            z_target = vae.reparameterize(mu, logvar)

        z_pred = gru_glove(token_batch)
        loss = loss_fn(z_pred, z_target)

        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
        total_loss += loss.item()

    print(f"Epoch {epoch+1}, Loss: {total_loss / len(loader):.4f}")
```

```python
torch.save(gru_glove.state_dict(), "gru_trained_with_glove_2.pth")
```

```python
gru_glove = GRU(vocab_size=len(vocab), embed_dim=100, latent_dim=256, pretrained_embedding=glove_matrix).to(device)
gru_glove.load_state_dict(torch.load("/kaggle/working/gru_trained_with_glove.pth", map_location=device))
```

```python
gru_glove.eval().to(device)
```

```python
def compare_logograms_binary(
    texts,
    gru,
    vae,
    vocab,
    device="cpu",
    threshold=0.5,
    cols=4,
    figsize=(12, 8)
):
    gru.eval()
    vae.eval()

    n = len(texts)
    rows = (n + cols - 1) // cols  # ceil division

    plt.figure(figsize=figsize)
    with torch.no_grad():
        for i, text in enumerate(texts):
            # Text -> tokens
            token_ids = torch.tensor(
                [vocab.encode(text)],
                dtype=torch.long
            ).to(device)
            # Text -> latent
            z = gru(token_ids)
            # Decode image
            img = vae.decoder(vae.fc_decode(z)).squeeze(0).cpu()
            # RGB -> grayscale
            img_gray = img.mean(dim=0)
            # Threshold
            img_binary = (img_gray > threshold).float().numpy()
            # Plot
            plt.subplot(rows, cols, i + 1)
            plt.imshow(img_binary, cmap="gray")
            plt.title(text, fontsize=10)
            plt.axis("off")

    plt.tight_layout()
    plt.show()
```

Now we can compare different terms and sentences and see how related concepts result in similar logograms, even when the terms were not present in the sentences of the dataset.

```python
texts_to_generate = ["Human", "Person", "Animal"]
compare_logograms_binary(texts_to_generate, gru=gru_glove, vae=vae, vocab=vocab, device=device)
```

```python
texts_to_generate = ["Before and After", "Past and Future", "Now"]
compare_logograms_binary(texts_to_generate, gru=gru_glove, vae=vae, vocab=vocab, device=device)
```

```python
texts_to_generate = ["Louise has questions", "Heptapods have the answer"]
compare_logograms_binary(texts_to_generate, gru=gru_glove, vae=vae, vocab=vocab, device=device)
```

```python
texts_to_generate = ["1, 2, 3", "One, two, three", "Message"]
compare_logograms_binary(texts_to_generate, gru=gru_glove, vae=vae, vocab=vocab, device=device, threshold=0.7)
```

From this point, you are free to type any sentence, convert it into a logogram and decide whether it makes any sense, or at least whether it looks cool.

While this approach improves generalization, it introduces an important caveat:

- The model becomes strongly biased toward semantic similarity as defined by GloVe.

This leads to a behavior where:

- Words with similar meanings → similar embeddings → similar latent vectors → similar logograms

However, this is not consistent with the nature of Heptapod-B, where even closely related concepts may have completely distinct logograms.

Despite not being fully aligned with the fictional language, this approach demonstrates how pre-trained linguistic knowledge can be injected into multimodal models, enabling them to operate beyond the constraints of small datasets.