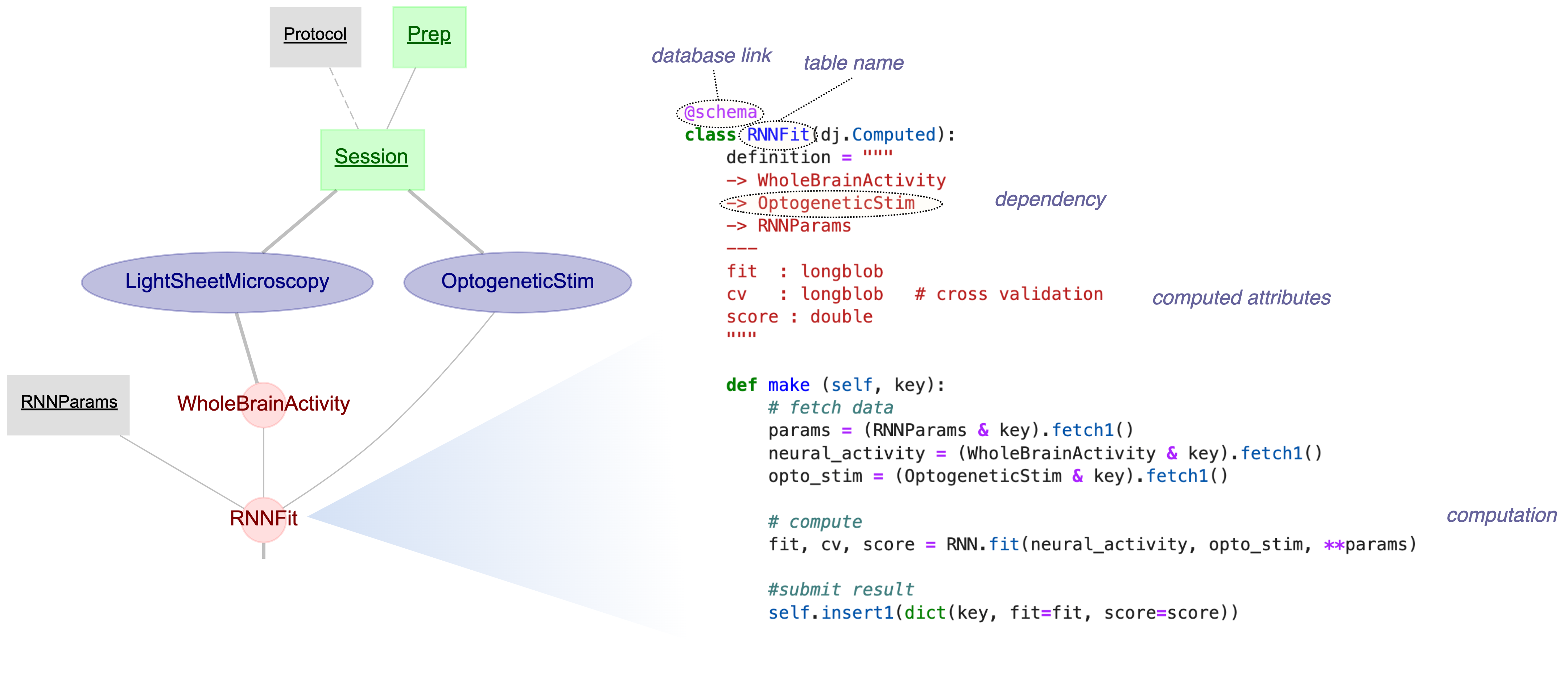

DataJoint for Python is a framework for scientific workflow management based on relational principles. DataJoint is built on the foundation of the relational data model and prescribes a consistent method for organizing, populating, computing, and querying data.

DataJoint was initially developed in 2009 by Dimitri Yatsenko in Andreas Tolias' Lab at Baylor College of Medicine for the distributed processing and management of large volumes of data streaming from regular experiments. Starting in 2011, DataJoint has been available as an open-source project adopted by other labs and improved through contributions from several developers. Presently, the primary developer of DataJoint open-source software is the company DataJoint (https://datajoint.com).

- Install with Conda:

  conda install -c conda-forge datajoint

- Install with pip:

  pip install datajoint

- Interactive Tutorials on GitHub Codespaces

- DataJoint Elements - Catalog of example pipelines for neuroscience experiments

- Contribute

Prerequisites for development:

- Docker (Docker daemon must be running)

- Python 3.10+

# Clone and install

git clone https://github.com/datajoint/datajoint-python.git

cd datajoint-python

pip install -e ".[test]"

# Run all tests (containers start automatically via testcontainers)

pytest tests/

# Install and run pre-commit hooks

pip install pre-commit

pre-commit install

pre-commit run --all-files

Tests use testcontainers to automatically manage MySQL and MinIO containers. No manual docker-compose up is required: containers start when tests run and stop afterward.

# Run all tests (recommended)

pytest tests/

# Run with coverage report

pytest --cov-report term-missing --cov=datajoint tests/

# Run specific test file

pytest tests/integration/test_blob.py -v

# Run only unit tests (no containers needed)

pytest tests/unit/

For development or debugging, you may prefer persistent containers that survive test runs:

# Start containers manually

docker compose up -d db minio

# Run tests using external containers

DJ_USE_EXTERNAL_CONTAINERS=1 pytest tests/

# Stop containers when done

docker compose down

Run tests entirely in Docker (no local Python needed):

docker compose --profile test up djtest --build

pixi users can run tests with:

pixi install # First time setup

pixi run test # Runs tests (testcontainers manages containers)

Pre-commit hooks run automatically on git commit to check code quality. All hooks must pass before committing.

# Install hooks (first time only)

pip install pre-commit

pre-commit install

# Run all checks manually

pre-commit run --all-files

# Run specific hook

pre-commit run ruff --all-files

pre-commit run codespell --all-files

Hooks include:

- ruff: Python linting and formatting

- codespell: Spell checking

- YAML/JSON/TOML validation

- Large file detection

- Run all tests:

  pytest tests/

- Run pre-commit:

  pre-commit run --all-files

- Check coverage:

  pytest --cov-report term-missing --cov=datajoint tests/
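The three checks listed above can also be chained from one small script that stops at the first failure. The following is only a sketch: `run_checks` and `CHECKS` are hypothetical names that are not part of the DataJoint repository, and the commands are copied verbatim from the list.

```python
import subprocess

# Commands copied from the checklist above, in the order listed.
CHECKS = [
    ["pytest", "tests/"],
    ["pre-commit", "run", "--all-files"],
    ["pytest", "--cov-report", "term-missing", "--cov=datajoint", "tests/"],
]


def run_checks(checks=CHECKS, runner=subprocess.call):
    """Run each command in order; return the first non-zero exit code, or 0.

    `runner` takes an argument list and returns an exit code. It defaults to
    subprocess.call and can be swapped for a stub when trying the helper out.
    """
    for cmd in checks:
        print("$ " + " ".join(cmd))
        code = runner(cmd)
        if code != 0:
            return code  # stop early so the failing step is the last output
    return 0
```

Calling `sys.exit(run_checks())` from the repository root would run the real commands. Passing a stub as `runner` exercises the control flow without starting any containers.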

For external container mode (DJ_USE_EXTERNAL_CONTAINERS=1):

| Variable | Default | Description |
|---|---|---|
| DJ_HOST | localhost | MySQL hostname |
| DJ_PORT | 3306 | MySQL port |
| DJ_USER | root | MySQL username |
| DJ_PASS | password | MySQL password |
| S3_ENDPOINT | localhost:9000 | MinIO endpoint |

For Docker-based testing (devcontainer, djtest), set DJ_HOST=db and S3_ENDPOINT=minio:9000.
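To illustrate how these variables and their defaults fit together, here is a minimal sketch of resolving them in Python. The helper `resolve_test_settings` is hypothetical and is not the project's actual test configuration code. Only the variable names and default values come from the table above.

```python
import os

# Defaults copied from the table above.
DEFAULTS = {
    "DJ_HOST": "localhost",
    "DJ_PORT": "3306",
    "DJ_USER": "root",
    "DJ_PASS": "password",
    "S3_ENDPOINT": "localhost:9000",
}


def resolve_test_settings(environ=None):
    """Return the settings, with environment values overriding the defaults.

    `environ` is any mapping of variable names to strings. It defaults to
    os.environ, and a plain dict can be passed for experimentation.
    """
    env = os.environ if environ is None else environ
    settings = {name: env.get(name, default) for name, default in DEFAULTS.items()}
    settings["DJ_PORT"] = int(settings["DJ_PORT"])  # the port is numeric
    return settings


# Docker-based testing overrides only the two hostnames:
docker_settings = resolve_test_settings({"DJ_HOST": "db", "S3_ENDPOINT": "minio:9000"})
```

Under this sketch, an empty environment yields the table defaults, while the Docker-based override changes only `DJ_HOST` and `S3_ENDPOINT`.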